By Sascha Meinrath

Imagine purchasing “up to” a gallon of milk for US$4.50, or paying for “up to” a full tank of gas. Most people would view such transactions as absurd. And yet, in the realm of broadband service, the use of “up to” speeds has become standard business practice.

Unlike other advertised goods and services, such as the fuel efficiency a car manufacturer promises its customers, broadband service speeds are not measured against any federally set standard. This means there is no clear way to tell whether customers are getting what they pay for.

Consumers typically purchase an internet service package that promises a speed up to some level – for example, 10 megabits per second, 25Mbps, 100Mbps, 200Mbps or 1000Mbps/1Gbps. But the speed you actually receive can often be much less than the advertised speed. Unlike the vehicle sector’s fuel efficiency standards, there’s no government mandate to systematically improve internet service speeds – and no national strategy for ensuring that slow connections are upgraded in a timely fashion.

A home user’s quality of service can also shift dramatically over relatively short periods of time and can become especially degraded during times of crisis. For example, during the early months of the COVID-19 pandemic, when millions of Americans switched from their office’s business-class internet connection to teleworking over their residential internet service, analysis showed widespread slowdowns in service speeds.

Follow-up research found that during this same time frame, the Federal Communications Commission was inundated with consumer complaints from across the country. Complaints about billing, availability and speed increased from February 2020 to April 2020 by 24%, 85% and 176%, respectively. So even though monthly bills did not change, customers experienced worse service, with lower speeds and less reliability.

The discrepancy between advertised and actual speeds also varies by geographic location. Rural areas consistently see larger discrepancies than urban areas. Broadband service descriptions are often confusing because many plans that consumers think are unlimited actually have data caps. These plans often limit data usage by slowing or “throttling” connections after users hit their caps.

Minimums and measurements

Federal Communications Commission

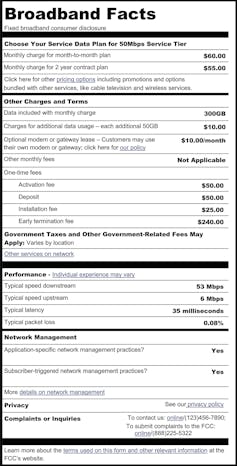

Consumer advocates have long called for a “broadband nutrition label” that would create a federal mandate for internet service providers (ISPs) to disclose speed, latency (for example, the level of delay in videoconferencing calls), reliability and pricing to potential and current consumers. The FCC is seeking comment on proposed broadband nutrition labels, and there is a risk that new labeling will be reduced to an opaque disclosure of “typical” speeds and latency.

In my view, guaranteed minimums should be a part of any residential class service offering, mirroring what is already standard contractual language for business class lines. In essence, instead of promising an “up to” ceiling, ISPs should guarantee a minimum floor for the service customers pay for.
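To make the difference between an “up to” ceiling and a guaranteed floor concrete, here is a minimal Python sketch. The function names and the speed-test readings are hypothetical and chosen purely for illustration; they do not come from any ISP, the FCC or an actual service contract.

```python
from statistics import median


def delivered_share(measured_mbps, advertised_mbps):
    """Median measured speed as a fraction of the advertised 'up to' speed."""
    return median(measured_mbps) / advertised_mbps


def meets_floor(measured_mbps, guaranteed_min_mbps):
    """True only if every measurement is at or above the guaranteed minimum."""
    return min(measured_mbps) >= guaranteed_min_mbps


# Hypothetical speed-test readings (Mbps) on a plan sold as "up to" 100 Mbps.
samples = [92.0, 61.5, 38.0, 74.0, 55.0]

share = delivered_share(samples, advertised_mbps=100.0)
floor_ok = meets_floor(samples, guaranteed_min_mbps=50.0)

print(f"Median speed is {share:.1%} of the advertised ceiling")
print(f"Meets a 50 Mbps guaranteed floor: {floor_ok}")
```

Under an “up to” promise, none of these readings breaks the terms of the plan. Under a guaranteed minimum of 50 Mbps, the single 38 Mbps reading would.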

Also, the FCC and the National Telecommunications and Information Administration can standardize and enforce the use of speed measurements that are “off net” rather than relying so heavily on “on net” metrics. On-net refers to the methodology typically used by both the FCC and ISPs to measure internet speed, in which the throughput of your connection is measured only between your home and your ISP. This ignores off-net connections: the links between your ISP and everything outside your provider’s network, which is virtually the entire internet.

On-net measurements also don’t document the congestion that often happens when different ISPs have a peering dispute, such as the infamous dispute between Comcast and Level 3, which led to degraded service for millions of Netflix subscribers. For many detrimentally affected customers, on-net speed tests often show no issues with their connections, even though they are experiencing major disruptions to their favorite off-net services, applications or websites.

On-net speed tests have led to claims that the median fixed broadband speed in the U.S. in May 2022 was over 150 Mbps. Meanwhile, off-net speed tests of U.S. broadband show median speeds that are quite a bit lower – median U.S. speeds for May 2022 were under 50 Mbps.
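As a rough illustration of how the choice of test server changes the headline number, the Python sketch below groups speed tests by whether the server sits inside or outside the ISP’s network and compares the medians. The `on_net` and `off_net` labels and every reading are made-up assumptions for this example, not real measurement data.

```python
from statistics import median


def median_by_scope(tests):
    """Group (scope, mbps) pairs by scope and return the median speed of each group."""
    grouped = {}
    for scope, mbps in tests:
        grouped.setdefault(scope, []).append(mbps)
    return {scope: median(values) for scope, values in grouped.items()}


# Hypothetical readings (Mbps): "on_net" tests end inside the ISP's own network,
# "off_net" tests end at a server beyond the ISP's interconnection points.
tests = [
    ("on_net", 160.0), ("on_net", 140.0), ("on_net", 155.0),
    ("off_net", 45.0), ("off_net", 60.0), ("off_net", 40.0),
]

medians = median_by_scope(tests)
gap = medians["on_net"] - medians["off_net"]

print(medians)
print(f"On-net median exceeds off-net median by {gap} Mbps")
```

The same connections yield very different medians depending only on where the test ends, which is why the measurement method matters as much as the measurement.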

This results in a real disconnect between the way policymakers and ISPs understand connectivity, and the lived consumer experience. ISPs’ business decisions can create bottlenecks at the edges of their networks, as when they implement lower-cost, lower-speed interconnections to other ISPs. This means that their broadband speed measurements fail to capture the results of their own decisions, which allows them to claim to deliver broadband speeds that their customers often do not experience.

Transparency

To protect consumers, the FCC will need to invest in building a set of broadband speed measures, maps and public data repositories that enable researchers to access and analyze what the public actually experiences when people purchase broadband connectivity. Prior efforts by the FCC to do this have been heavily criticized as imprecise and inaccurate.

The FCC’s latest proposal for the creation of a National Broadband Map – at an estimated cost of $45 million – is already receiving criticism because its measurement process is a “black box,” meaning its methodology and data are not transparent to the public. The FCC also appears to once again rely almost entirely on ISP self-disclosure for its data, which means that it is likely to vastly overstate not only speeds, but where broadband is available as well.

The new National Broadband Map may, in fact, be far worse in terms of data access because of fairly stringent licensing arrangements under which the FCC appears to have granted control over the data – collected with public funding – to a private company to then commercialize. This process is likely to make it extremely difficult to accurately ascertain the true state of U.S. broadband.

Lack of transparency about these new maps and the methodologies undergirding them could lead to major headaches in disbursing the $42.5 billion in broadband infrastructure grant funding through the Broadband Equity, Access, and Deployment Program.

Let’s Broadband Together, an independent initiative from Consumer Reports, is crowdsourcing the collection of monthly internet bills from across the country. (Full disclosure: I’m an adviser to this project.) Efforts like these from consumer groups are crucial for shedding light on the gap between official measures and consumer experience. The FCC’s methodologies have been highly inaccurate, which has hampered the nation’s ability to address the digital divide.

Reliable, fast access to the internet is a necessity to work, learn, shop, sell and communicate. Making informed telecommunications policy decisions and reining in false advertising is a matter not just of what gets measured but how it’s measured. Otherwise, it’s difficult to know whether the broadband service you get is the service you pay for.


Sascha Meinrath is Director of X-Lab and Palmer Chair in Telecommunications at Penn State.

Jimbo99 says

Throttling & power management has always been a thing with the internet. As the world becomes more battery dependent & grid power hungry this is inevitable. Any broadband has a max & an average. Even gaming benchmarks can be cheated with software code optimizations & even cheats. Anything that is utility industry service has off peak hours for the grid. This is yet another Biden thing as the hoax & lies of saving the planet as the population growth continues to grow. You can’t have 1-3% population growth and expect the carbon footprint to disappear, even though science has fabricated a concept of carbon neutrality, Google makes these claims of being carbon neutral, yet they’ve grown exponentially as the internet grows. I want to know the numbers, the true actuals of Flagler county growth under the Biden. I mean we all see the traffic increases, the vacant lots & new houses. My residential has at least 25 new constructions, many duplexes that are a doubling of the residential lot for a head count. And even Mullins himself took credit for broadband expansion over the summer & August election. Reporting live from Jimbo’s Vineyard, this is Jimbo99 & the Palm Coast Eyewitness news team.

dave says

Is it legal for AT&T to throttle my bandwidth?

Yes.

The FCC under the Trump Administration repealed the Open Internet Order, an Obama-era regulation that forced ISPs in the USA to treat all internet traffic roughly the same way. That repeal now allows ISPs to discriminate by application, service, device, or content.

That being said, AT&T says it does not engage in discriminatory bandwidth throttling and adheres to basic net neutrality principles. has been warned about throttling .

All ISPs throttle.

Danm50 says

The people own the airwaves. What about that Mr FCC?