By Filippo Menczer

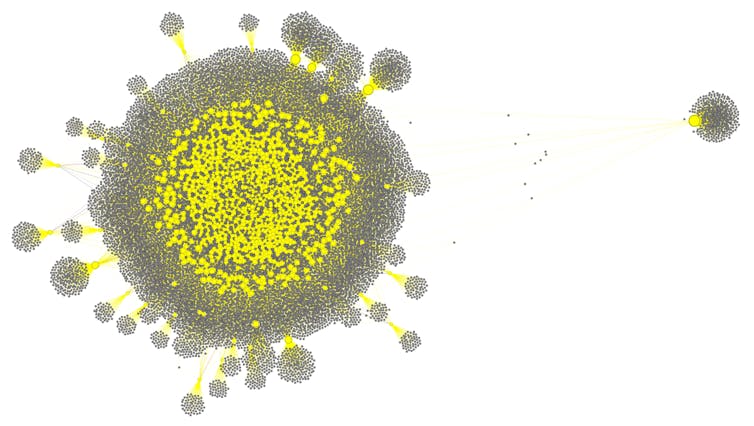

In mid-2023, around the time Elon Musk rebranded Twitter as X but before he discontinued free academic access to the platform’s data, my colleagues and I looked for signs of social bot accounts posting content generated by artificial intelligence. Social bots are AI-driven programs that produce content and interact with people on social media. We uncovered a network of over a thousand bots involved in crypto scams. We dubbed this the “fox8” botnet after one of the fake news websites it was designed to amplify.

We were able to identify these accounts because the coders were a bit sloppy: They did not catch occasional posts with self-revealing text generated by ChatGPT, such as when the AI model refused to comply with prompts that violated its terms. The most common self-revealing response was “I’m sorry, but I cannot comply with this request as it violates OpenAI’s Content Policy on generating harmful or inappropriate content. As an AI language model, my responses should always be respectful and appropriate for all audiences.”
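To make the idea concrete, here is a minimal Python sketch of a filter that flags posts containing this kind of self-revealing text. It is an illustration only, not the code or the exact patterns used in the study: the phrase list, the function names `is_self_revealing` and `flag_accounts`, and the input format (a dictionary mapping account names to lists of post strings) are all assumptions.

```python
import re

# Hypothetical phrase list for illustration; not the patterns used in the study.
SELF_REVEALING_PATTERNS = [
    r"as an ai language model",
    r"i cannot comply with this request",
    r"violates openai[’']s content policy",
]
_PATTERN = re.compile("|".join(SELF_REVEALING_PATTERNS), re.IGNORECASE)


def is_self_revealing(post: str) -> bool:
    """Return True if the post contains a telltale AI refusal phrase."""
    return _PATTERN.search(post) is not None


def flag_accounts(posts_by_account: dict[str, list[str]]) -> set[str]:
    """Return the accounts that have at least one self-revealing post."""
    return {
        account
        for account, posts in posts_by_account.items()
        if any(is_self_revealing(post) for post in posts)
    }
```

A filter like this only catches careless operators. Any account whose posts never include a refusal message passes through untouched.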

We believe fox8 was only the tip of the iceberg because better coders can filter out self-revealing posts or use open-source AI models fine-tuned to remove ethical guardrails.

The fox8 bots created fake engagement with each other and with human accounts through realistic back-and-forth discussions and retweets. In this way, they tricked X’s recommendation algorithm into amplifying exposure to their posts and accumulated significant numbers of followers and influence.

Such a level of coordination among inauthentic online agents was unprecedented – AI models had been weaponized to give rise to a new generation of social agents, much more sophisticated than earlier social bots. Machine-learning tools to detect social bots, like our own Botometer, were unable to discriminate between these AI agents and human accounts in the wild. Even AI models trained to detect AI-generated content failed.

Bots in the era of generative AI

Fast-forward a few years: Today, people and organizations with malicious intent have access to more powerful AI language models – including open-source ones – while social media platforms have relaxed or eliminated moderation efforts. They even provide financial incentives for engaging content, irrespective of whether it’s real or AI-generated. This is a perfect storm for foreign and domestic influence operations targeting democratic elections. For example, an AI-controlled bot swarm could create the false impression of widespread, bipartisan opposition to a political candidate.

The current U.S. administration has dismantled federal programs that combat such hostile campaigns and defunded research efforts to study them. Researchers no longer have access to the platform data that would make it possible to detect and monitor these kinds of online manipulation.

I am part of an interdisciplinary team of computer science, AI, cybersecurity, psychology, social science, journalism and policy researchers who have sounded the alarm about the threat of malicious AI swarms. We believe that current AI technology allows organizations with malicious intent to deploy large numbers of autonomous, adaptive, coordinated agents to multiple social media platforms. These agents enable influence operations that are far more scalable, sophisticated and adaptive than simple scripted misinformation campaigns.

Rather than generating identical posts or obvious spam, AI agents can generate varied, credible content at a large scale. The swarms can send people messages tailored to their individual preferences and to the context of their online conversations. The swarms can tailor tone, style and content to respond dynamically to human interaction and platform signals such as numbers of likes or views.

Synthetic consensus

In a study my colleagues and I conducted last year, we used a social media model to simulate swarms of inauthentic social media accounts using different tactics to influence a target online community. One tactic was by far the most effective: infiltration. Once an online group is infiltrated, malicious AI swarms can create the illusion of broad public agreement around the narratives they are programmed to promote. This exploits a psychological phenomenon known as social proof: Humans are naturally inclined to believe something if they perceive that “everyone is saying it.”

Filippo Menczer and Kai-Cheng Yang, CC BY-NC-ND

Such social media astroturf tactics have been around for many years, but malicious AI swarms can effectively create believable interactions with targeted human users at a large scale, and get those users to follow the inauthentic accounts. For example, agents can talk about the latest game to a sports fan and about current events to a news junkie. They can generate language that resonates with the interests and opinions of their targets.

Even if individual claims are debunked, the persistent chorus of independent-sounding voices can make radical ideas seem mainstream and amplify negative feelings toward “others.” Manufactured synthetic consensus is a very real threat to the public sphere, the mechanisms democratic societies use to form shared beliefs, make decisions and trust public discourse. If citizens cannot reliably distinguish between genuine public opinion and algorithmically generated simulation of unanimity, democratic decision-making could be severely compromised.

Mitigating the risks

Unfortunately, there is no single fix. Regulation granting researchers access to platform data would be a first step. Understanding how swarms behave collectively is essential to anticipating risks. Detecting coordinated behavior is a key challenge. Unlike simple copy-and-paste bots, malicious swarms produce varied output that resembles normal human interaction, making detection much more difficult.

In our lab, we design methods to detect patterns of coordinated behavior that deviate from normal human interaction. Even if agents look different from each other, their underlying objectives often reveal patterns in timing, network movement and narrative trajectory that are unlikely to occur naturally.
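As a rough illustration of what a timing pattern can look like, the Python sketch below compares when pairs of accounts are active and flags pairs whose activity overlaps almost perfectly. It is a simplified stand-in, not the detection methods described above: the one-minute bins, the Jaccard similarity measure, the 0.8 threshold and all function names are assumptions chosen for the example.

```python
from itertools import combinations


def active_bins(timestamps, bin_seconds=60):
    """Map posting times (in seconds) to the set of time bins they fall in."""
    return {int(t // bin_seconds) for t in timestamps}


def jaccard(a, b):
    """Jaccard similarity of two sets; 0.0 when both are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def suspicious_pairs(activity, threshold=0.8, bin_seconds=60):
    """Return (account, account, score) for pairs with near-identical timing.

    `activity` maps an account name to a list of posting times in seconds.
    The threshold is an illustrative assumption, not a validated value.
    """
    bins = {acct: active_bins(ts, bin_seconds) for acct, ts in activity.items()}
    pairs = []
    for u, v in combinations(sorted(bins), 2):
        score = jaccard(bins[u], bins[v])
        if score >= threshold:
            pairs.append((u, v, score))
    return pairs
```

Two accounts that post in the same minutes again and again score close to 1, while unrelated accounts rarely share more than a few bins. A practical system would combine several such signals, since timing alone can also match people reacting to the same live event.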

Social media platforms could use such methods. I believe that AI and social media platforms should also be more aggressive in adopting standards for watermarking AI-generated content and for recognizing and labeling it. Finally, restricting the monetization of inauthentic engagement would reduce the financial incentives for influence operations and other malicious groups to use synthetic consensus.

The threat is real

While these measures might mitigate the systemic risks of malicious AI swarms before they become entrenched in political and social systems worldwide, the current political landscape in the U.S. seems to be moving in the opposite direction. The Trump administration has aimed to reduce AI and social media regulation and is instead favoring rapid deployment of AI models over safety.

The threat of malicious AI swarms is no longer theoretical: Our evidence suggests these tactics are already being deployed. I believe that policymakers and technologists should increase the cost, risk and visibility of such manipulation.


Filippo Menczer is Professor of Informatics and Computer Science at Indiana University.

Deborah Coffey says

The United States of America will not survive the LIES. What you allow, you become. Impeach Trump and put the brakes on AI stat!

Pogo says

100%

Mr. Menczer, meet Mr. Harris.

As stated

https://www.google.com/search?q=filippo+menczer

As stated

https://www.google.com/search?q=tristan+harris

Extra Credit

https://www.el-balad.com/16888795

EC: File

Laurel says

I think there may be one or two here.

We watch a lot of YouTube, which can be either very educational, or mind drippings. The algorithms are far too extreme. We watch as guests, but it still majorly tracks. Every so often, I clear all history which helps, but it doesn’t take long for it to think we are only interested in one or two subjects. Boom! Limitless recipes for cabbage!

Good, ole Alexa goes into time out daily. It tried to update to its new platform, but I nixed that. Suddenly, there was a Valley Girl talking back to me! When I commented, to myself, that the voice was awful, and not using the wake word, it commented back “Eeewwwww!” I don’t want a buddy. The contraption in the corner isn’t my “friend,” it’s a machine. Plus, when I ask it if it’s listening, it responds “I don’t know how to help you with that.” Uh huh. So, the little contraption gets the plug pulled, not just turned off, daily. It’s good for the weather, music and spelling.

Now, the latest is, gmail threw in Gemini even though I turned it down. The little shit recently summed up my private email! I didn’t ask. Why do I need an email summary when I can read it right in front of me? Time for a new email provider, or keep gmail for junk only. Do I go for open source? It couldn’t be worse.

My phone keeps texting me that “Has it been six years already?” with a collage of my cat pictures set to music. People like this stuff? I mean it’s pretty simple minded. Well, it’s the current nature of things. Too bad.

Smitty says

What I find really concerning is the use of AI bots in politics. Social media is inundated with memes that pretend to present facts but are misleading and designed to do no more than play on people’s fears and beliefs in order to further a political message that has no basis in reality. As well as creating these memes, AI bots are also flooding the comments of these memes in order to present the appearance of a large number of people agreeing with the message. Most unfortunate is that too many people have not developed the critical skills to see through what they are told and take the time to verify facts.

Laurel says

Smitty: You are so correct! Just wait, before the midterms, you are going to see more bullshit than you could imagine possible. Between restrictive voting measures and redistricting, the fake narratives will be free-flowing, and the gullible, who prefer not to do any research, will soak it up.

Sherry says

Anybody see comments from the Bo Peep “Troll-Bot” lately? Maybe that “Troll Farm” has been dismantled . . . couldn’t happen soon enough!

FlaglerLive says

Bo Peep/Dusty has been cautioned repeatedly to comply with our comment policy’s prohibition of using more than one handle, a deceptive way of pretending that different people are commenting.

Sherry says

Interesting to know that Bo Peep and Dusty are one and the same entity. Since it hasn’t recently slithered out from under its rock to counter these comments, I am even more convinced “IT” is a “TrollBot”.